News Round-Up: EU Message Scanning Persists; UK Court OKs Facial Recognition; U.S. Pushes Operating System Age Checks

Twice a week, the editorial team of Freedom Research compiles a round-up of news that caught our eye, or of under-reported aspects of the news that we feel deserve more attention.

Over the past few days, the following topics attracted our attention:

London Police Win Court Battle Over Facial Recognition Systems

The U.S. Plans Age Verification at the Operating System Level

Tech Companies Continue Scanning Private Messages Despite Regulation Expiry

London Police Win Court Battle Over Facial Recognition Systems

Privacy advocates in the UK lost a case in the High Court in which they sought to prevent or restrict the London Police’s use of real-time facial recognition technology. The High Court has now rejected claims that, by scanning everyone’s faces in public places, the Met Police have violated human rights and privacy, reports the BBC.

The complaint was filed by youth worker Shaun Thompson and Silkie Carlo, director of the campaign group Big Brother Watch. They argued that facial recognition could be used arbitrarily or in a discriminatory manner, particularly against ethnic minorities. Their lawyers also contended that facial recognition cameras make it impossible for people to move about without their biometric data being collected and processed. According to the plaintiffs, the system violates the right to privacy established in the European Convention on Human Rights, as well as the rights to freedom of expression and assembly. In their view, the technology gives the police too much discretion and makes people less willing to protest.

Thompson is, in fact, one of those who have suffered from a false positive produced by facial recognition technology. In February 2024, the London police detained him after mistaking him for his brother, who was a suspect in a crime. Thompson describes the experience as frightening and unfair: although he was cooperative, his bank cards and passport were not enough to convince the police that the technology had made a mistake. He has vowed to appeal the ruling.

The High Court, however, found that “the risk and potential scope of racial discrimination was only weakly alleged” and that Thompson’s and Carlo’s human rights had not been violated. Thus, the British High Court essentially gave the police permission to continue using real-time facial recognition technology. Police Commissioner Mark Rowley, of course, praised the court’s decision, calling it a huge victory for public safety. He added: “The courts have confirmed our approach is lawful. The public supports its use. It works. And it helps us keep Londoners safe.” The Commissioner believes that the question is no longer whether the police should use facial recognition, but why they shouldn’t.

Police Minister Sarah Jones also welcomed the decision enthusiastically, stating that “there can be no true freedom if people live in fear of crime in their communities.” According to the minister, a massive investment is planned to roll out facial recognition systems across the entire country. Jones emphasized the often-cited argument that law-abiding citizens have nothing to fear, as the technology is supposed to target only wanted individuals. Yet there are countless examples to the contrary from every corner of the world in recent years.

Scotland Yard plans to deploy vehicles equipped with real-time facial recognition at selected locations in the capital. The cameras will then scan everyone walking on the street, and the images will be compared against a database of criminals or missing persons. If a face is not found in the database, the system is supposed to delete the image immediately. In the event of a potential match, the system notifies the police. The police are then supposed to verify the match once more before deciding to detain the person.

According to London police, 2,100 arrests have been made using facial recognition technology since the beginning of 2024. Last year, more than three million people passed in front of real-time facial recognition cameras, and 12 false alarms were recorded, none of which led to an arrest.

The U.S. Plans Age Verification at the Operating System Level

Last week, U.S. lawmakers from both parties introduced a bill, the “Parents Decide Act” (HR 8250), which would require operating system providers to verify the age of every user. The bill would shift responsibility for age verification from individual apps to operating system providers, according to Biometric Update.

The “Parents Decide Act” was introduced by Representatives Josh Gottheimer and Elise Stefanik. Gottheimer explained that the internet is becoming increasingly dangerous for children, and that the issue is no longer limited to social media but also includes artificial intelligence and other platforms that shape children’s thoughts, feelings, and behavior. He argued that safety measures are often lacking and that children are currently expected to manage their own online safety, which he called neither realistic nor responsible. Parents, he said, should decide which apps children can download, what content they see, and how they interact online, rather than leaving those decisions to the algorithms of tech companies.

Gottheimer also emphasized that the law would establish a consistent standard for all platforms. The phone’s operating system would transmit the restrictions set by a parent to apps and artificial intelligence systems. He argued that this would give parents meaningful control, with the new age verification law working alongside other efforts to promote online safety, including Sammy’s Law, the Kids Online Safety Act, and the Children and Teens’ Online Privacy Protection Act.

Under the proposed bill, users would have to provide their date of birth to use an operating system. If a user is under 18, a parent or legal guardian would need to verify their age. Operating system companies would be required to develop systems to process the information necessary for age verification. Oversight would rest with the Federal Trade Commission (FTC) under its privacy and data protection rules, and the Commission would be required to issue regulations within 180 days of the law taking effect. These rules would address shared devices, parental controls, and data protection, to ensure that date-of-birth data is collected securely and misuse is prevented. The FTC would also be required to report to Congress on rulemaking progress and on how companies are complying with the new law.

Tech Companies Continue Scanning Private Messages Despite Regulation Expiry

Major tech companies have confirmed that they are still scanning private messages, even though the EU’s temporary regulation allowing them to do so has expired. The European Commission, meanwhile, has declined to say whether the practice complies with EU law and has avoided questions on the matter. Instead, a spokesperson emphasized last week that “proactive behavior by companies is essential to protect children in the EU and elsewhere” and that child protection should not depend on companies’ business decisions, writes EUPerspectives.

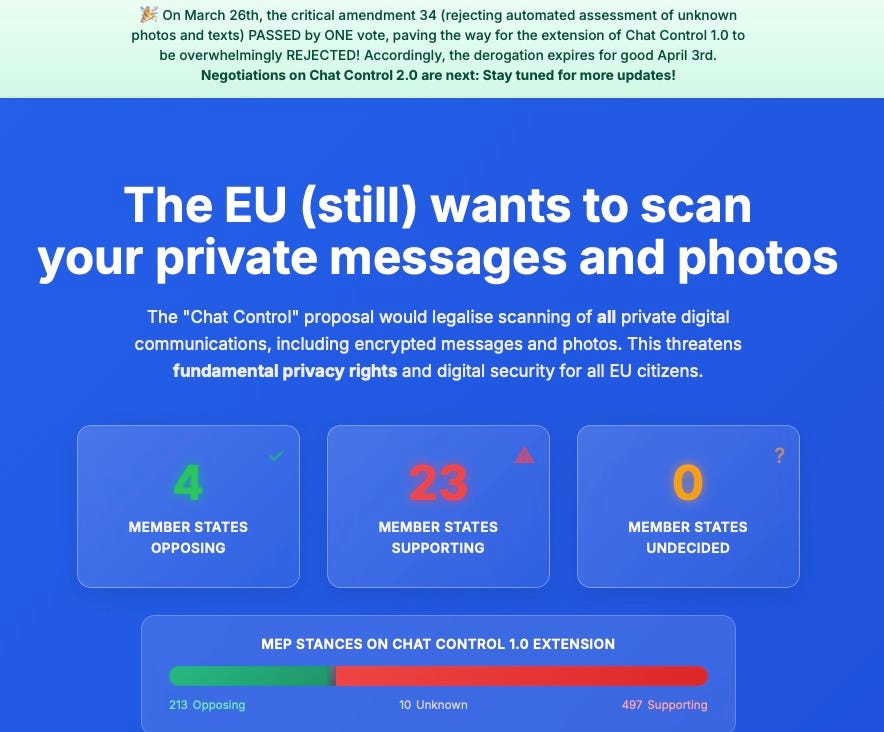

The EU introduced a temporary Chat Control measure in 2021 to combat child sexual abuse material (CSAM) online. The measure was intended to last only until a permanent law was adopted. On March 11, the European Parliament voted to extend the temporary message monitoring until August 3, 2027, on the condition that the monitoring be more limited: Parliament demanded targeted detection, a ban on monitoring encrypted messages, and application only to specific suspects or groups. On March 26, however, Parliament rejected a last-minute attempt to extend the measure, and it expired on April 3.

Just one day after the measure expired, Google, Meta, Microsoft, and Snap issued a joint statement confirming that they would continue scanning private messages. “As EU institutions continue to negotiate an immediate, interim solution and a durable framework, signatory companies (Google, Meta, Microsoft, and Snap) reaffirm their continued commitment to protecting children and preserving privacy, and will continue to take voluntary action on our relevant Interpersonal Communication Services,” the companies stated in a press release published on April 4. The companies warned that the expiration of the measure could leave children unprotected and criticized EU institutions for inaction, saying they had failed to reach an agreement in time.

Thus, the EU now faces a regulatory gap. The companies say they are continuing the scanning that the original measure covered, but now on their own terms. The European Commission, however, has not clarified whether such a practice is in line with EU law. At the same time, negotiations are continuing in the EU on the next version of message control (“Chat Control 2.0”), which would replace the temporary measure with a permanent one.

According to the website Fightchatcontrol.eu, six countries opposed message control last November. Since then, the number of opposing countries has dropped to four: the Czech Republic, Italy, the Netherlands, and Poland. Austria, Belgium, and Finland, which previously opposed the measure, appear to have joined the ranks of supporters. Countries that had not yet decided in November, such as Germany, Greece, Romania, Luxembourg, and Slovenia, also now appear to support the measure.

HR 8250 should be named the "Government Decides Act."