US House Judiciary Committee: European Union Implements Widespread Censorship

For ten years, the European Commission has been organizing censorship campaigns around the world.

The Committee on the Judiciary of the U.S. House of Representatives has published a report “The Foreign Censorship Threat, Part II: Europe’s Decade-Long Campaign to Censor the Internet Globally and Its Harmful Impact on American Freedom of Speech” on how European Union laws, regulations, and court rulings are forcing companies to implement censorship around the world.

The Committee bases its report largely on internal documents from technology companies, minutes of secret meetings, emails, and other materials that the companies produced in response to the Committee's subpoenas. It concludes that the European Commission (EC) has been organizing censorship campaigns around the world for at least ten years. According to the report, the EC has successfully pressured major social media platforms to change their content moderation rules, including by removing “inappropriate” content. The Committee says that the Commission often treats truthful information and political speech as “inappropriate” content, framing its actions as a fight against “hate speech” and “disinformation.” This is particularly striking in some of the most important political debates of recent years, such as those over the COVID-19 pandemic, immigration, and transgender ideology. According to the Committee, the EC also pays disproportionate attention to conservative content and interferes in elections in both Europe and the US.

European Censorship Began to Sprout 10 Years Ago

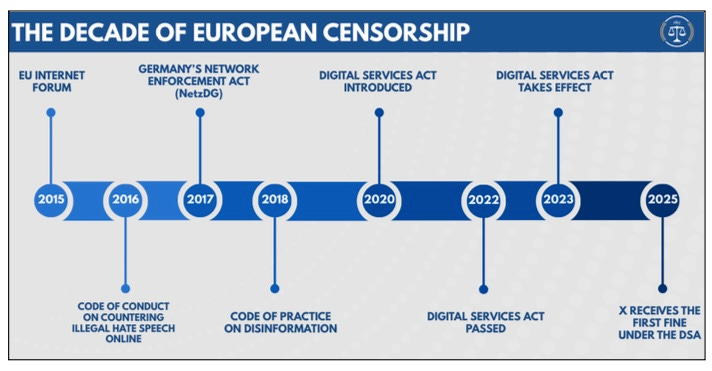

According to the Committee, European censorship campaigns began in the mid-2010s, when social media became increasingly important in political debate. From the outset, senior EU leaders envisioned a sweeping digital censorship law that would give the European Commission complete control over online discourse. “Hate speech” and “misinformation” became labels that European agencies applied to political speech they disagreed with or perceived as a threat to their power. Around the same time, European concerns about so-called hate speech were fueled by the wave of immigration that swept across the continent, sparking new political debates about multiculturalism, assimilation, and the threat of terrorism.

According to the Committee on the Judiciary, the first significant milestone in the fight against hate speech and misinformation came in 2015, when the Commission established the EU Internet Forum (EUIF). The forum's stated primary goal is to “address the misuse of the internet for terrorism,” but in pursuit of that goal it began to “encourage” platforms to censor legitimate political speech. By 2023, the EUIF had completed a handbook that gives technology companies “recommendations” on how to moderate legitimate, non-violent speech, such as populist rhetoric, political satire, anti-government and anti-EU content, anti-elite content, anti-immigration and anti-LGBTIQ content, Islamophobic content, and meme subculture. In other words, the EUIF advises platforms on how best to censor so-called borderline content.

Efforts to monitor and moderate European online content continued in the following years. In 2016, the EC adopted a Code of Conduct on Countering Illegal Hate Speech Online, and in 2018, a Code of Practice on Disinformation. EC officials described both codes as voluntary for platforms. In practice, however, platforms knew that they would eventually have to comply with EU censorship requirements or face heavy fines, so it was less painful to start complying immediately.

Facebook, Instagram, TikTok, and Twitter pledged to implement the hate speech code and censor hate speech, which the code defined only vaguely. In January 2025, the European Commission incorporated the hate speech code into the framework of the Digital Services Act (DSA), which is binding on large online platforms. As a result, the hate speech guidelines are now effectively mandatory as well. The platforms also began following the disinformation code, which requires large online platforms to reduce the visibility and spread of information deemed to be disinformation. Since 2022, the code has also obliged platforms to take part in working groups where online platforms, civil society organizations (CSOs), and European Commission agencies discuss how to censor so-called disinformation. At these meetings, the EC pressured platforms to change their content moderation rules and adopt additional censorship measures. Fact-checking, elections, and the demonetization of conservative news outlets were also discussed, and a “consensus” was reached under strong pressure from the EC.

Member States Began to Eagerly Introduce Censorship Laws

At around the same time, EU Member States began introducing their own censorship laws. One of the first was Germany’s 2017 Network Enforcement Act (NetzDG). When a user files a complaint, the law obliges social media platforms to assess the legality of the content against 18 provisions of German criminal law. Critics argue that some of these provisions are draconian and criminalize ordinary political speech as hate speech. The law requires platforms to remove manifestly illegal content within 24 hours, which creates a strong incentive to overblock: to avoid hefty fines, platforms tend to remove questionable content wholesale, including lawful speech. According to Facebook, NetzDG has created a regime in which deletion is the safer choice whenever there is doubt, contrary to the established Western practice of presuming speech lawful unless proven otherwise.

After the law was passed, German courts soon ruled that so-called illegal content under NetzDG should be removed worldwide, because Germans could otherwise still view the prohibited content using a VPN. The U.S. House Judiciary Committee believes that this German approach sparked the EU’s campaign to censor web content worldwide.

The Digital Services Act as the Culmination of the European Commission’s Censorship Rules

According to the U.S. House Judiciary Committee, the EC treated the Code of Conduct on Countering Illegal Hate Speech Online and the Code of Practice on Disinformation simply as precursors to the Digital Services Act, periodically tightening them and trying to make them mandatory. For example, the more aggressive 2020 Code of Practice on Disinformation aimed to “support the vaccine strategy by effectively combating disinformation,” because the EC wanted to censor criticism of its COVID-19 vaccine policy. Nor did the Commission intend to wait for the Digital Services Act to be finalized: it emphasized that there was a clear agreement with the platforms and that they had agreed to continue their censorship measures.

In April 2022, the Council of the European Union and the European Parliament reached political agreement on the Digital Services Act. The Commission was already publicly describing the Code of Practice on Disinformation as essentially mandatory, and in June it wrote that “the Code of Practice on Disinformation will be backed up by the Digital Services Act, which means that companies that don’t comply face fines of up to 6% of global turnover.”

The much-anticipated Digital Services Act entered into force in November 2022, and its obligations for the largest platforms began to apply in 2023. The Committee on the Judiciary of the U.S. House of Representatives had already called the regulation a sweeping censorship law in an interim report published last summer. In its current report, the Committee adds further evidence and concludes that the DSA was the culmination of ten years of effort by the European Commission. The regulation finally gave the authorities a law imposing binding censorship obligations that could silence dissenting online speech in Europe and elsewhere and give them control over information circulating on the internet.

The European Commission Is Pushing for Censorship Around the World

The Committee on the Judiciary of the U.S. House of Representatives states that the EC’s main objective for years has been to regulate the content moderation rules of online platforms. These rules determine what content is allowed or prohibited on a platform and how widely it is distributed. Because platform content rules shape discourse in the public sphere, they are important pressure points for the authorities. This is especially true now that content moderation is largely in the hands of artificial intelligence and other automated tools, and the role of humans is becoming increasingly marginal.

Most major social media platforms, however, are U.S. companies that apply a single set of content moderation rules worldwide. The U.S. House Judiciary Committee considers global content rules reasonable: if each country had its own rules, platforms would need to know the exact location of every user, which would pose a considerable risk of data leaks and privacy violations. Knowing users’ exact locations would also give the authorities additional leverage to monitor suspects. Moreover, users can hide their location with a virtual private network (VPN), so country-specific rules would not be effective anyway, and they would entail significant costs for the platforms. The Committee therefore believes that the European requirement to adjust content rules also affects the content of U.S. users and poses a direct threat to freedom of speech in the United States.

When the DSA was adopted in 2022, pressure on platforms increased further; since then, the EC’s warnings have been backed by the threat of fines of up to 6% of a platform’s global turnover. The regulation requires very large online platforms to identify systemic risks, including misleading or deceptive content, disinformation, any actual or foreseeable negative effects on civic discourse and electoral processes, and hate speech. Systemic risks may also include content that is not illegal. Platforms must then mitigate the risks they identify; in other words, they have to moderate content that European regulators deem misleading, deceptive, or hateful. To do this, platforms must continuously review and modify their content moderation rules.

The EC has also imposed its first fine under the DSA: in December 2025, it fined the social media platform X €120 million for breaching transparency obligations, citing the deceptive design of its blue checkmarks, shortcomings in its advertising repository, and inadequate data access for researchers. The U.S. House Judiciary Committee considers this the most obvious example of retaliation, since X has defended freedom of speech around the world. More broadly, the Committee concludes that platforms have never had, and still do not have, any choice but to change their content moderation rules and censor content globally.